By 2026, the AI toy category has exploded from a niche segment into one of the top-selling items during major shopping seasons. Smart plush toys have evolved far beyond simple "storytelling devices" into true emotional companions capable of understanding feelings, maintaining long-term memory, and delivering natural, continuous interaction. The ESP32-S3 has become the go-to main controller for premium emotional companion plush toys thanks to its built-in AI acceleration, Wi-Fi 6 connectivity, and excellent edge-computing performance within a constrained power and size budget.

This article explains - from a purely technical perspective - how ESP32-S3 is architected into modern AI plush toys, covering hardware foundation, ultra-low-power management, multi-modal wake-up, voice pipeline, long-term memory implementation, privacy-by-design principles, and structural considerations.

Why ESP32-S3 Is the Preferred SoC for Emotional AI Plush Toys

Dual-core Xtensa LX7 + AI vector instructions → efficient execution of lightweight wake-word models and local caching logic

Wi-Fi 6 with low-power modes → ideal for "edge + cloud" hybrid architecture

Generous on-chip SRAM + support for external 8 MB SPI Flash → sufficient space for caching recent memories locally (reducing cloud round-trips)

Native support for dual digital microphones → good noise suppression for far-field pickup in a plush environment

Easy interfacing with low-power 3-axis gyroscopes (SLSC7A20, QMI8658, etc.) → enables physical "shake-to-talk" interaction

3.7 V lithium battery domain with NTC monitoring → mature and safe power solution

These characteristics allow developers to achieve simultaneously: months-long standby, real-time voice interaction, sensor fusion, and secure memory persistence - all inside a child-safe plush form factor.

Achieving μA-Level Deep Sleep & 90+ Days Standby

The single biggest user complaint in AI toys is poor battery life. ESP32-S3 addresses this through carefully designed power states:

| State | CPU | Wi-Fi | Peripherals | Target Current |

|---|---|---|---|---|

| Deep Sleep | Suspended | Off | Only wake-up interrupts | μA level |

| Standby | Low freq | Off | Sensors on hold | < 100 μA |

| Network Connected | Normal | Connected | Sensors idle | < 10 mA |

| Active Dialogue | Full speed | Active | Full peripherals | 50–300 mA |

Key mechanisms:

Voltage checked every 60 seconds; <3.5 V → voice warning; <3.4 V → forced shutdown with voice prompt

When Type-C is plugged in → AI functions disabled instantly, enters pure charging mode

After 10 minutes of inactivity (no voice / button / shake) → deep sleep

Result: realistic "charge once a month" experience for end users

Triple Wake-up: Voice + Button + Shake - Solving "Hard to Wake" Pain Point

ESP32-S3's low-power wake sources are leveraged to create three natural activation paths:

Voice wake-up

Lightweight local model running continuously

Custom wake word support (e.g. "Hey Teddy")

Target: >95% success rate within 2 m in quiet environment

End-to-end latency usually ~500 ms, max <1.5 s

Button wake-up

Short press power button → starts dialogue

During playback → short press interrupts speech and starts new recording

Shake wake-up (most differentiating)

Gyroscope threshold: angular velocity >20°/s for ≥0.5 s

Filters out casual table vibrations / accidental touches

Mimics real-life "shake me when you want to talk" interaction

This multi-modal approach dramatically lowers the activation barrier, especially for young children.

Clean Status Indication with Single Blue LED

One blue LED communicates state unambiguously:

Booting / Wi-Fi connecting → slow blink (0.5 Hz)

Idle & connected → steady on

Listening or speaking → fast blink (5 Hz)

Deep sleep → off

Standard dialogue flow: Wake → "ding" chime → record (VAD endpoint detection) → cloud inference → playback (interruptible at any time)

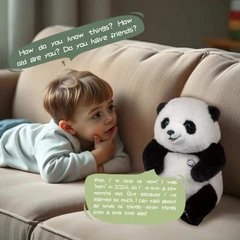

Long-Term Memory - The Core Differentiator

Long-term memory transforms the toy from "stranger every time" into a growing friend.

Architecture

Cloud database (primary storage) + local 8 MB Flash cache (recent & frequent items)

After each user utterance → cloud model decides whether new information is worth remembering

Only high-confidence explicit statements are permanently stored (e.g. "My cat's name is Mimi")

Ambiguous / low-confidence items kept temporarily and decay

Conflict resolution: prompt user for confirmation when contradiction detected

Typical UX example

Day 1: "My name is Tim and I'm 5 years old." → Toy: "Nice to meet you, Tim! I'll remember you're 5."

Day 2 morning: User says "Good morning!" → Toy: "Good morning Tim! You said you like dinosaurs yesterday - want a dinosaur adventure story today?"

Target recall accuracy: >90% in cross-session validation.

Voice Pipeline & Child-Safety Filters

Local wake-word engine + VAD (voice activity detection) for accurate endpointing

Playback interruption: microphone stays active during TTS; wake-word detected → instant stop & re-record

Cloud responses pass multi-layer content filters (sensitive words, violence, etc.)

Anti-addiction logic: per-session time cap, nighttime disable option

Dialect & slang tested extensively to reduce misrecognition

Privacy & Security by Design (Strategic Priority)

Local-first: wake-word + basic commands processed on-device

All cloud uploads encrypted (HTTPS)

Memory strongly tied to user account - lost device does not leak data

One-click memory wipe & export supported

Parent dashboard: view summaries, toggle memory, set time limits

These measures directly address the industry's biggest trust pain points.

Mechanical & Acoustic Integration Considerations

Speaker: independent sealed back cavity required for 85 dB @ 50 cm (3 W, 4 Ω)

Microphones positioned away from speaker airflow path

Gyro sensitivity parameter tunable per plush stuffing density

Button travel: fabric → guide post → microswitch (clear tactile feedback)

Basic IPX2 splash resistance recommended

Thermal path: keep ESP32-S3 away from direct battery contact